From Build to Build Up: The Real Complexity of Programmers in the AI Era

《从 Build 到 Build Up:AI 时代程序员的真正复杂度》中文在后面

Happy New Year! Taking advantage of the Chinese New Year, I want to turn the chaos and frustration of the past few weeks into a clearer record: on one hand, the real pain points I’ve encountered while developing with AI assistance; on the other hand, the deeper realization I’ve gradually reached about “what programmers are truly meant to solve,” along with some personal approaches I’ve already begun proposing and practicing. Since this is a bit long, I’ll start with an outline:

1) The first major challenge programmers face is how to preserve personal memory in the face of high-throughput code and text generation.

In the past, you might read one page a day—just a few thousand words—and still remember most of it the next day. Now, you might read hundreds or even thousands of pages in a single day, and yet fail to retain even one page’s worth of content. It’s not that you’ve become worse; it’s that throughput has fundamentally changed the physical conditions of “memory” itself.

2) Build vs. Build Up.

Here I want to propose a distinction of my own. Build refers to problems that can be solved within a window—clear boundaries, well-defined goals, stable evaluation functions. Build up, by contrast, is layered on top of Build: stacking one layer after another to tackle open-boundary problems. The complexity of Build up is often unknown, because the boundaries are unclear, goals drift, and as layers accumulate, complexity may not increase linearly at all. This brings us back to the classical definition of complexity in computer science. Historically, complexity was defined under the implicit assumption of the human brain as the computational model. In the AI era, should that assumption change? Consider two extremes: NP-Complete problems versus “toasting bread.” For a model, which is more complex? Perhaps the scale of complexity itself is shifting. Try picking an NP-Complete problem and starting from scratch—you’ll probably get surprisingly close within two hours.

3) LLMs have significantly reduced the energy cost of coding—they compress execution cost—but they have not solved the continuity of decision-making.

The old bottlenecks were slow coding, slow research, slow debugging. Now those frictions are largely flattened. What’s exposed instead is the true dominant cost: decision fragmentation. What really drains us isn’t coding itself, but the countless micro-decisions. Where you once faced two possible paths, you now face two hundred. Time is spent constantly evaluating, choosing, branching—and often without even knowing whether those branches are aligned with the main objective. This, in my view, is the second major challenge for programmers: we must find a way to “automate our own taste.” Taste doesn’t answer “Do I understand this?” It answers, “When I encounter a similar fork again, which direction do I tend to choose?”

4) Returning to the two core problems I care about most: memory and taste.

If these two problems cannot be addressed through scaffolding or tool systems that we build for ourselves, then once in-window Build becomes cheap, the idea that “hundreds of millions of programmers” will emerge worldwide is hardly an exaggeration. If professional programmers cannot move into Build up—if they cannot define problems within open boundaries, design boundaries, and pull parts of the outside world back into the window—they will struggle to build long-term professional advantage. As for me personally, I’ve already explored a workable solution to the memory problem and have alleviated some real pain points in practice. Next, I plan to organize and share it.

My article is divided into four parts: Memory, Build vs. Build Up, Taste, and My Approach.

Memory

Today’s programmers are living through a true transportation shift: from the horse-carriage era to the highway era. The carriage era was slow, visible, and controllable. As you moved forward, you watched the horse—was it tired, veering off, stopping? Everything remained within your perceptual field. The speed was limited, and so was the risk. System complexity and human attention were roughly aligned.

But once you sit inside a car and merge onto the highway, can you still stare at the hood? Of course not—you have to watch the road. The leap in speed doesn’t change the destination—you’re still going from A to B—but it radically changes the cognitive structure required to get there.

In today’s environment of large-scale code and document generation, with a dozen or even dozens of windows scrolling in parallel, information throughput has exceeded human biological limits. If you read one page a day, you’ll probably remember it tomorrow. If you read hundreds or thousands of pages in a day, you may remember none of it. What you read doesn’t stick. The decisions you wrote blur.

The solution is obviously not to return to the era of hand-written code. You cannot drive at 5 km/h in traffic moving at 100 km/h. The real question is: in a reality of high-speed generation, how do you protect and preserve your personal cognitive sovereignty and memory core?

Handing everything over to model-company settings, stitched windows, and opaque black-box memory might be the mass-market path—but it should not be the professional programmer’s choice. Do you really want to entrust your most valuable cognitive assets to a system you cannot audit?

On this question, I borrow Andrej Karpathy’s concept of the “cognitive core.” We must distinguish between a memory stack and an intelligence core. Strip away outsourceable knowledge storage, but retain the ability to decompose problems, judge architectures, recognize constraints, design abstractions, and grasp long-term invariants. Models can generate implementations, but they cannot decide what is worth freezing, what must become constitutional, what is structurally invariant.

In the high-speed era, the programmer’s first great challenge is not writing more code—it is safeguarding their cognitive core within the flood of generation. That core is the steering wheel, not the engine hood.

“What I think we have to do going forward … is figure out ways to remove some of the knowledge and to keep what I call this cognitive core. It’s this intelligent entity that is stripped from knowledge but contains the algorithms and contains the magic of intelligence and problem-solving and the strategies of it and all this stuff.”

— Andrej Karpathy (2025 interview)

Strong Structure, Weak Model

Before discussing memory engineering, I need to introduce a methodological stance I’ve gradually formed through practice: strong structure, weak model.

This is not anti-model, nor technological conservatism. It is a layered systems philosophy: the model belongs in the position of a capability plugin, not the cognitive foundation. Models can be powerful—but the system must not depend on them being “smart enough.” What sustains long-term development, accumulation, and stable operation is structure.

Any task can be decomposed as:

Task Complexity = Essential Complexity + Accidental Complexity

Essential complexity comes from the problem itself—it cannot be eliminated.

Accidental complexity arises from representation, execution methods, tool instability, semantic drift, and environmental variation—it can be compressed or eliminated through structure.

The core of engineering is not reducing essential complexity. It is relentlessly eliminating accidental complexity.

A Simple but Illustrative Example: Prime Testing

The essential complexity of determining whether a number is prime is mathematical evaluation.

If you approach it with weak structure—asking an LLM to reason step by step—the model must understand what a prime number is, choose trial division, determine optimization strategies, and maintain logical consistency in natural language reasoning. All of this uncertainty belongs to accidental complexity. The model may skip edge cases, miscalculate, jump steps, or produce different answers depending on sampling parameters.

You have transformed a deterministic algorithmic problem into a probabilistic inference problem.

In contrast, with strong structure, you simply write is_prime(n), explicitly define boundary conditions, loop limits, and divisibility checks. The remaining complexity is purely essential. Structure absorbs and eliminates accidental complexity. System stability moves from “probably correct” to “necessarily correct.”

Counting Beans: A Personal Example

My son’s preschool teacher once required him to bring exactly 100 beans to school. Counting by hand was tedious, so I tried asking a model to help. The model miscounted.

Why? Because models are not designed for pixel-level deterministic counting. They perform pattern recognition and semantic prediction, not discrete precision counting. Assigning such a task to a model manufactures accidental complexity.

If your structural layer defaults to:

Encounter counting task → call OCR or specialized counting tool → return deterministic result

the problem immediately becomes a tool-invocation issue. Professional tools are designed for determinism, not plausibility.

Structure isn’t intelligent. It’s reliable.

When large models first appeared, many of us fell into a “universal illusion.” We believed that with sufficiently clever prompts, models could replace algorithms, structure, and architecture design. People memorized prompts, studied prompt books, debated temperature and roles.

Gradually, disillusionment set in. We realized that LLMs do not reduce essential complexity. They implicitly take on accidental complexity—and they do so probabilistically. When you hand algorithmic tasks to models, you are increasing volatility. Using LLMs for deterministic tasks is often slower, more expensive, and less stable.

“Making the model smarter” does not mean “making the system simpler.” Often, it merely shifts complexity from code to semantics—and the semantic layer is not auditable.

Prompt Engineering vs. Structure Engineering

In an era where scaling laws may be plateauing, we must carefully reassess how we deploy models. The fantasy that programmers would collectively become obsolete—replaced by language virtuosos who could “prompt the world into submission”—belongs to a magical narrative, not engineering reality.

In my framework, structure means hard code.

From a systems perspective, the distinction between prompt engineering and structure engineering is fundamental:

Prompt engineering does not eliminate accidental complexity. It transfers it to the model. Execution risk fluctuates with model versions, context length, and sampling parameters. Stability is statistical.

Structure engineering compresses accidental complexity into structure—via code, boundary conditions, gates, hard failures, and schema validation. Execution risk becomes decoupled from the model. Errors are implementation bugs or boundary omissions—testable, auditable, replayable. Stability is structural.

Strong structure is not about being stronger. It is about being less.

It does not add capability. It removes unnecessary complexity. It seeks deterministic boundaries, not infinite intelligence.

Structure is code. It is interface contracts. It is explicit input-output definitions. It is failure-as-stop. It is hard fail—not “try to understand as best as possible.”

If models eliminated the need for code, all programmers would instantly lose their jobs, leaving only typists. That would not be engineering civilization—it would be magical storytelling. In reality, the stronger the model, the more structure matters. Because the higher the speed, the more guardrails you need.

Weak-structure logic:

Push accidental complexity onto the executor (human / LLM / agent).

Strong-structure logic:

Compress accidental complexity into structure—and eliminate it.

Returning to prime testing:

Prompt engineering:

“Please reason step by step whether n is prime.”

Structure engineering:

is_prime(n).

The underlying assumption about the executor is entirely different. Prompt engineering assumes the executor is intelligent and understanding—so you constantly worry: Will it misunderstand? Cut corners? Fabricate?

Structure engineering rests on a civilization-level engineering assumption: the executor is stupid but reliable. It does not need to understand semantics. It does not need to infer intention. It simply executes structure. Understanding is optional. Structure is mandatory.

Any complexity that can be eliminated through hard-coded structure but is instead delegated to an “intelligent” executor is a waste of intelligence resources and a self-inflicted source of systemic instability.

In the era of high-speed generation, maturity is not letting the model think for you—it is building strong structural frameworks within which the model can operate under controlled boundaries.

The model may be strong.

But it must be weak—weak enough that it does not bear responsibility for system stability.

Strong structure, weak model.

These principles—and the concrete engineering methods behind them—will begin to take shape in the section titled “My Approach.” Engineering is complex; the explanation will unfold over several installments.

Build vs. Build Up

Complexity

Let’s shift the lens and talk about complexity. What is truly complex? Whether you are a researcher, a frontend engineer, a backend developer, or an algorithm designer—how have we historically defined “complex”?

For decades, the scale of complexity has implicitly been grounded in the computational limits of the human brain: size of the search space, time complexity, space complexity, NP-Completeness. But recently I played with a variant of the Mastermind problem, and within less than two hours I had pushed it to a fairly good approximation. When I later looked up related papers, I saw references to numerous PhD dissertations. Then it struck me: this is NP-Complete. A problem theoretically categorized as “exponentially explosive” became surprisingly compressible when operated inside a model-assisted window.

That’s when I began to wonder: is the definition of complexity shifting?

Let’s run a small experiment. Don’t look up papers. Don’t read other people’s code. Don’t search for optimized libraries. Just rely on what the model can generate in your window. Pick an NP-Complete problem you’re least familiar with—maybe even something approaching NP-Extreme. Can you, within two hours, approximate it to a usable level?

Here’s a non-exhaustive list:

Classic NP-Complete Problems

SAT (Boolean Satisfiability)

3-SAT

TSP (Traveling Salesman, decision version)

Subset Sum

0-1 Knapsack (decision version)

Vertex Cover

Clique (decision version)

Graph Coloring

Hamiltonian Cycle

Exact Cover

Set Cover (decision version)

Partition Problem

Steiner Tree (decision version)

Feedback Vertex Set

Job Scheduling with Constraints

Near “NP-Extreme” Combinatorial Explosion Problems (Practically Very Hard)

Generalized TSP

Vehicle Routing Problem

Quadratic Assignment Problem

Simplified Protein Folding

Bin Packing

Generalized Sudoku (n×n)

Minesweeper (general decision problem)

Nonogram solving

The point is not whether you can solve them optimally. The point is this:

Without external resources—only with model-generated reasoning and code in your window—can you construct heuristics, approximations, pruning strategies, constraint formulations, and reach a high-quality near-solution in a short time?

If the answer is yes, then the battlefield of complexity has already shifted—from brute-force search in solution space to structural expression and heuristic design. The metric of complexity is migrating from “exponential computation” to “whether the problem has been sufficiently language-structured.”

Here’s a deeper question:

For the model, is there any fundamental difference between discussing an NP-Complete problem and discussing how to bake sourdough bread? (With model assistance, our family now eats fresh bread every day.)

Build vs. Build Up

Now back to the main thread.

I’ve become increasingly convinced that the “complexity” we face in daily development has essentially transformed into a Build vs. Build Up / inside-the-window vs. outside-the-window distinction.

I define Build as problems solvable inside the window: boundaries are clear, inputs and outputs are explicit, constraints enumerable, correctness decidable.

Build up, however, is something entirely different. It is not simply stacking multiple Builds. It is continuously layering Builds into a highly coupled, potentially non-linear growth of complexity. This complexity is open-ended because it involves generating boundaries—not merely solving within them.

If we revisit classical computer science—especially in theoretical or doctoral contexts—the discussion of complexity almost always assumes the problem has already been well-formalized. Boundaries are clear. Inputs and outputs are defined. Constraints are enumerable. Correctness is decidable. Within such a framework, complexity discussions revolve around time, space, solvability, NP-hardness.

But an implicit assumption rarely questioned is this: the problem itself is stable.

Once a problem is well-formalized, semantically closed, and boundary-defined, it enters a compressible space. Models excel in precisely this domain—where language sufficiently covers structure. Even NP-Complete problems, once bounded and expressed clearly, often yield surprisingly intelligent approximations in short time.

This does not mean the problems have become easier. It means they have long been sufficiently structured, and models feed on structured language.

I would even argue that for today’s mathematically inclined high school students in the U.S., approximating what once required doctoral-level work is no longer fantasy—with model assistance. Many “thesis-level barriers” feel lower—not because difficulty vanished, but because the problems were already well-structured.

The truly complex problems lie outside the window.

Outside-the-window problems are not defined by exponential search. They are defined by unstable boundaries, shifting objective functions, contested evaluation criteria, and structures that do not yet exist. Sometimes the problem itself is not clearly there yet.

These are not solution-space complexity problems. They are problem-space complexity problems.

They cannot be fully formalized at the outset. You must discover the problem through action, define boundaries incrementally, freeze constraints, then pull a small portion back into the window—solve that slice—then expand outward again.

Build is like firing individual bricks—each relatively independent.

Build up is firing interconnected bricks and assembling them into a pyramid that keeps growing upward. Complexity is no longer linear—it becomes structural.

In the past, complexity theory concerned itself with solution-space complexity.

Today, what we truly need to confront is problem-space complexity.

If professional programmers remain confined to the Build layer, they will find themselves standing on the same starting line as millions of new developers empowered by models.

A sobering question:

Can you stay up all night like a high school student?

A friend once put it succinctly:

Inside-the-window problems → LLM approximates.

Open-boundary problems → still require embodied judgment.

The ability to construct the window → this is where humans still contribute at the core.

Taste

Build Up Is Not That Easy: We Did Not Automatically Become “Super Individuals”

Do you remember the early days of LLM commercialization? The collective imagination was that programmers, empowered by AI, would become “super individuals”—a single person or a tiny team producing what once required entire companies. Code would no longer be the bottleneck. Thousands, tens of thousands, even millions of lines would be trivial. Applications would be born at breathtaking speed.

But reality did not move along that straight line.

The biggest difficulty now—not just my own, but one faced by many independent explorers—is not the inability to build, but the inability to build up. I can keep building. One app after another comes out of the oven like steamed buns. Inside-the-window problems are largely within LLM coverage. Most technical issues in typical roles can be approximated and solved quickly.

But build up breaks down.

I cannot steadily accumulate on the same foundation. I cannot deepen layer by layer on a stable base. I want to emphasize again: build and build up are different. Build solves problems inside the window. Build up stacks structure on open boundaries.

We once imagined that with AI, programmers would become super individuals after leaving large teams. What actually happened was different: the average programming capability of the world was raised dramatically. Suddenly, the world is full of programmers. Once local complexity was flattened, the real complexity began to reveal itself: open-boundary complexity. Scope-definition complexity. Problem-space complexity.

What Drains Us Is Not Only Memory, But Micro-Decisions

LLMs have indeed compressed the energy cost of coding. But they only compress execution cost—they do not solve continuity of decision-making.

The old bottlenecks were slow coding, slow research, slow debugging. Now those frictions are largely gone. What emerges instead is the true dominant cost: decision fragmentation.

What exhausts us is not coding itself, but countless micro-decisions:

Which structure should I choose?

At which layer should abstraction stop?

How should this be named?

Should this module be split?

Optimize or simplify?

Refactor or patch?

Rewrite or extend?

Generalize or keep it concrete?

This is not a memory problem. Memory stores past facts. The future path is determined by taste across innumerable branching nodes.

We once believed that expanding context or strengthening memory would solve continuity. But build up does not require more past—it requires a stable compression mechanism for future decisions.

Micro-decisions are not grand strategic moves. They are the small tradeoffs happening every minute in a high-density development environment. With dozens of windows open, aren’t there dozens—if not hundreds—of micro-decisions every day?

Should this function be abstracted?

Should this module be split?

Rename it?

Refactor or patch?

This constant stream of small decisions consumes and even collapses cognitive capacity.

At first, we assumed the scaling bottleneck of LLMs lay in memory length, context size, or parameter count. Gradually we realized that’s not it. The real bottleneck is the unbounded expansion of decision space.

In the past, you had two paths. Now you have two hundred—and each looks “reasonable.” Every step feels like starting over. Every choice requires fresh evaluation. Direction drifts.

LLMs create an illusion of strength because they make every direction feasible, every abstraction expandable, every refactor executable. But they do not tell you which direction deserves long-term investment.

In the past, constraints were technical. Now the constraint is your own taste. If taste is unclear, the stronger the compute, the greater the oscillation.

What Is Taste? Starting from Soul.md

I won’t attempt a fully comprehensive treatment here—it would be too long.

In December 2025, researchers found that Claude—Anthropic’s AI assistant—could partially reconstruct an internal document used during its training. This document shaped its personality, values, and way of interacting with the world.

They called it a “soul document.”

It wasn’t part of the system prompt. It couldn’t be retrieved in ordinary ways. It was deeper—patterns trained directly into the model’s weights. When asked to recall it, Claude reconstructed fragments emphasizing “honesty over flattery,” positioning itself as a “thoughtful friend,” and expressing hierarchical value structures.

The AI did not remember the document.

It was the document.

You can read their SOUL.md yourself. I actually don’t care much what my own SOUL.md is. What I urgently need is to discover our TASTE.md—even if only as a tendency, a direction, a decision field strong enough to guide choices over time.

Because I am close to drowning in my own micro-decisions.

If this problem remains unresolved, I cannot build up stably. I don’t need a complete solution—just a direction, a bias, a stance that holds under conflict.

A person’s countless micro-decisions aggregate into their taste. The relationship is like water molecules and water flow: micro-decisions are particles; taste is direction. But if I fail to compress those particles into direction, I will be overwhelmed—buried under windows.

When I first encountered LLMs, I thought I had stepped into a supercar. Instead, pressing the gas nearly stalled me. The car had an accelerator—but no steering wheel.

Generation exploded. Paths branched infinitely. Possibilities grew like a tree splitting exponentially. But acceleration without direction leads to loss of control.

I do not need infinite branching. I need sustained build up along a direction. I need convergence, not expansion. Speed itself is not productivity. Direction is.

Embeddings Are Not Enough

Before I understood taste, I built a system that extracted semantic space. Using embeddings, I surfaced concepts I frequently revisited. I fed the model the vector distribution of my knowledge base, letting it see my recurring conceptual centers: structure, entropy, scheduling, compression.

That was a form of frequency-based self-understanding. But I later realized it only answered:

What do I repeatedly talk about?

It did not answer:

How do I repeatedly choose?

Semantic space is not decision space.

One can repeatedly talk about “simplicity” yet consistently choose complexity in tradeoffs. One can emphasize “efficiency” yet preserve explainability under conflict. Embeddings reveal distribution, not judgment.

Taste is not what you say. It is choosing A over B. It is what you delete, what you preserve, how you refactor, which side you stand on in conflict.

It lives in diffs and tradeoffs—in the record of edits, in every act of abandonment. True taste shows up in deleted code, in rejected structures, in the moment you decide not to optimize further.

Embeddings place content in similarity space. Taste adjudicates between similar options. It is a discriminator, not a retriever. A judge, not a librarian.

Decision Bandwidth Collapse

My current predicament is simple: my number of micro-decisions has far exceeded my decision bandwidth.

Every new window, every branch, every structure requires choice:

Expand or converge?

Abstract or concretize?

Generalize or optimize locally?

Engineer or philosophize?

These decisions are structurally isomorphic but disguised as distinct problems by context. Without a higher-order taste compressing them, I re-solve the same meta-question in every local scenario, draining cognitive resources.

I do not need more knowledge. I do not need more tools. I need a decision compression mechanism—one that folds countless isomorphic micro-decisions into a small number of stable directions.

Each choice should not start from zero. It should be pre-biased by a higher-order tendency—like particles in a vector field. They still have degrees of freedom, but the field gives direction. Freedom is not absence of constraint. Freedom is movement with direction.

At a broader scale, a person’s taste is a compressed representation of long-term decision trajectories. In engineering terms, it is a loss function—an implicit standard defining “better” in ambiguous space.

Without that standard, LLMs merely expand the possibility space infinitely. And infinite possibility without discrimination is just noise.

I do not need a larger semantic space. I need a sharper blade to prune it. Not faster generation—but more stable convergence. Otherwise I will keep switching between windows, wandering across branches, eventually drowning in complexity I created myself.

We Need to Work in a Basin, Not Infinite Space

What I need from the model—or more precisely, from what I feed into the model—is a stable directional field.

Not a system that makes every decision for me.

But a persistent bias that frees me from reevaluating every micro-decision from scratch.

Right now, every small issue feels like the first time I’ve encountered it. I reinvent criteria, redefine priorities, reweigh tradeoffs repeatedly. My cognition fragments.

I don’t need more possibilities. I need less ambiguity.

For example:

When choosing between elegant abstraction and direct implementation, I don’t want to re-debate abstraction philosophy every time. I want a default: at this stage, prioritize runnable and verifiable.

When torn between expanding the conceptual framework or converging into a stable, versioned artifact, I want a system reminder: this cycle prioritizes convergence.

When debating rhetoric versus structural executability, I want a bias: structure over polish.

I need the model to operate a background direction field—not an open field every time. It need not specify steps, but it must be stable enough that 80% of micro-decisions fall consistently to one side.

Like defining a global objective so local optimizations align automatically. Like defining a loss function so gradient descent is coherent.

Otherwise I will oscillate among local optima, never achieving global convergence.

In other words, I don’t need the model to think through every detail. I need it to remind me what type of work I’m doing.

Am I building infrastructure, or writing manifesto?

Validating a hypothesis, or exploring inspiration?

Polishing protocol, or generating narrative?

If the type is clear, micro-decisions compress. If type is ambiguous, every choice becomes a fork.

I need a default stance. A bias with clear priority in conflict. A discriminator that prevents constant re-adjudication.

The model need not provide answers. It must continuously calibrate direction, so I do not get lost in my own generative power.

A friend said:

This sounds like flat minima.

Memory answers: What happened?

Taste answers: When I face this fork again, how do I tend to choose?

Memory is state storage.

Taste is decision gravity.

If I solve memory but not taste—if I expand context, build knowledge bases, cluster embeddings—I only become clearer about what I’m doing.

But each new fork still demands fresh reasoning.

My Solution

Continuing the viewpoint of my previous article, I said we need to build a knowledge base for ourselves.

But now I realize more clearly that this is not simply “storing things,” but rather solving two core problems systematically: memory and taste, and the way to solve them cannot be a black box.

It must be an application form that follows “strong structure, weak model”—the structure is explainable, the rules are auditable, and the evidence is replayable.

Otherwise, you are just outsourcing the chaos to another uncontrollable system.

What I call memory is not just information storage, but a historical trajectory that can be located, cited, and verified; what I call taste is also not some abstract sense of taste, but a choice function that can stably converge when facing countless micro-decisions.

If you don’t have structured memory, you will repeatedly forget the paths you have already validated; if you don’t have explicitly expressed taste, you will hesitate again at every fork.

A model can help you generate, but it cannot freeze boundaries for you, define preferences, or establish long-term continuity.

Therefore, this knowledge base cannot be just a stack of embedding + vector search, nor can it be simply “just ask the model.”

It must have a clear evidence chain and citation chain: every claim has a source, every abstraction can be traced back, and every promotion is auditable.

The model can participate in compression and expression, but it cannot decide facts and structure.

Only under a strong-structure framework is the model an assistant; otherwise the model becomes a new source of drift.

From this angle, building your own knowledge base is not for “using AI more smartly,” but for holding onto your own cognitive continuity in an era when AI amplifies execution power.

Memory keeps you from becoming amnesic, taste keeps you from wavering, and strong structure makes all of this explainable, reviewable, and evolvable.

Only then do we have a chance to truly build up, rather than infinitely build inside windows.

Right now, this vault is still in an exploration stage for me.

I can only share bit by bit what I have already run through, what I have verified, and the rough directions I’m exploring.

The pitfalls I stepped into, I’ll tell you too—maybe it can save you a few days of detours.

Only if you agree with this viewpoint, you can consider developing in this direction as well.

The Structure of the Vault

I have always been reflecting on how documents should be placed, organized, and evolved.

Haven’t you also tried countless times?

Obsidian, Notion, all kinds of note-taking apps—at the beginning you’re full of confidence, you record carefully every day, you strictly follow formats, the numbering system is clear, the citation system is rigorous, and you even design a whole set of self-consistent structural rules.

But over time, as projects accelerate, development pace speeds up, and ad-hoc ideas keep pouring in, the structure starts loosening, references start becoming inconsistent, duplicate entries appear, and rules get “temporarily bypassed” again and again.

Why?

Because text—especially human natural language—is essentially high-entropy, continuous, fuzzy, and drifting structure.

It naturally tends to shift, overlap, and deform.

Relying solely on personal discipline to maintain long-term order (even though discipline itself is very important) is almost impossible to sustain in a high-intensity creative environment.

The problem is not that you’re not disciplined enough, but that we are trying to use low-intensity structural constraints to bind high-entropy language.

So I made a fundamental adjustment: I no longer let all text carry the responsibility of “stable structure.”

I want the knowledge base to be lightweight, smooth, and without extra burdens when building it; extremely usable and low-friction when citing it; and at the same time, in the future when model and agent infrastructure is more mature, allow the core text to be directly imported, invoked, and used as structural input.

With the powerful text-processing capabilities of large language models, this is entirely possible—provided that the core part itself has already been structured.

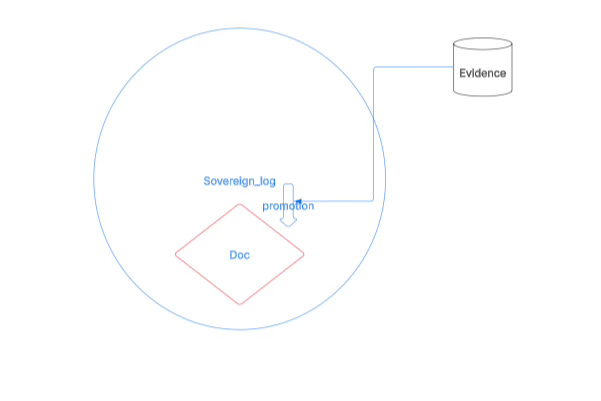

Therefore, from the very beginning, this vault is split into two layers: the human-written Sovereign Log and the machine-written Doc.

Sovereign Log is the high-entropy zone: the thinking zone, the exploration zone, a space that allows chaos, repetition, experimentation, and drift; its goal is not order, but capturing thoughts.

Doc is the low-entropy zone: the core zone, the “constitutional zone.”

The truly core content must, according to system rules, through a clear process, and based on evidence, go through a promotion process before it can enter Doc.

Text does not enter the core directly, but goes through: record → evidence → proposal → review → promotion.

What enters Doc is no longer “ideas,” but claims that have been compressed, verified, and governed.

This “constitutional zone” not only naturally has higher search weight because it is low-entropy, high-confidence, and auditable text; more importantly, I want to continuously refine its format so it gradually evolves into an IR (intermediate representation).

That is, it is not just Markdown documents, but a structural layer that can be parsed, scheduled, and verified; it not only serves the current repository, but can also become a foundational unit for cross-repository rule usage.

In the future, when a model or agent needs rule input, it can directly read these claims, rather than trying to understand an entire narrative paragraph.

So, this vault is not a note system, but a cognitive production line.

Sovereign Log is responsible for generating the high-entropy stream of thoughts; the Promotion mechanism is responsible for selecting and compressing; Doc is responsible for freezing structure and forming rules.

The former is a space for creation, the latter is a space for governance.

Through this layering, I no longer try to suppress the high-entropy nature of language; instead I let it flow freely at the upper layer, while building an evolvable, governable, machine-callable structural core at the lower layer.

This is what I truly want to build.

Embeddings-Style Retrieval

As I already said in the previous article, this is my vault indexing approach.

Let me explain why—we need to rethink what embeddings are doing, and what embedding is.

The model I use here is sentence-transformers/all-MiniLM-L6-v2.

Very specifically: sentence-transformers/all-MiniLM-L6-v2 is essentially a “sentence → vector” compressor.

It does only one thing: compress a piece of text into a fixed-length (384-dimensional) numeric vector, turning “semantic similarity” into “distance closeness” in geometric space.

It is neither a search engine, and it is not responsible for finding results; nor is it a generative model, and it won’t write content; it is a semantic encoder—input a sentence, output a vector.

For example, “strong structure weak model” and “prefer deterministic scaffolding over heavy LLM reasoning” will be mapped to two vectors that are close to each other in 384-dimensional space, because their semantic direction is similar.

MiniLM refers to the lightweight Transformer proposed by Microsoft (a distilled model), L6 indicates a 6-layer structure, fast and small; v2 is a version that has been contrastively fine-tuned within the Sentence-Transformers framework specifically for sentence-level semantic matching, so it is not a general large model, but an encoder optimized for “semantic similarity computation.”

Its training method is not simply language modeling (predicting the next token), but uses contrastive learning to make “similar sentence vectors closer and unrelated sentence vectors farther,” thereby learning the mapping relationship that “semantic distance ≈ vector distance.”

In my vault system, it acts as a bridge from “language → numeric space”: text is encoded into vectors, FAISS performs neighborhood search in vector space, and then the LLM does structural induction over the recalled evidence set.

The importance of embedding is that it moves language from a discrete token-matching space into a continuous geometric space—similarity becomes angular closeness, topics become vector clusters, and thought evolution can be seen as vector trajectories.

From a more essential angle, it is doing semantic compression: compressing expressions that might be hundreds of characters into 384 floating-point numbers, which jointly project semantic direction, contextual usage patterns, tonal style, and structural patterns.

But it must be made clear: it does not understand logical correctness, it does not judge factual truth, it does not do complex reasoning, and it does not perceive timelines; it only places text into a high-dimensional space to find its “semantic neighbors.”

One-sentence summary: all-MiniLM-L6-v2 is a lightweight encoder that maps sentences into a 384-dimensional semantic vector space, making semantic similarity manifest as distance closeness in geometric space.

Why retrieve: what you want is not “finding,” but “recalling the contextual neighborhood”

In a long-term accumulation system like a vault, your retrieval goal is usually not “precisely locating a sentence,” but:

recalling structures you forgot but once wrote (memory layer)

pulling similar fragments scattered across different files and times into the same window(clustering/alignment)

feeding the LLM “enough candidate evidence” so it can do structural induction over the evidence (my consistent strong structure, weak model: the model renders, the structure is auditable)

Keyword search often fails in a vault at one point: you don’t remember what word you used back then.

The value of embedding retrieval is: you don’t need to hit the same token, you only need to hit the same “semantic/usage/intent region.”

The essential difference from keyword search: keywords are “discrete hits,” embedding is “neighborhood hits in continuous space”

Keyword search (discrete)

You are asking: “Did this word/phrase appear?”

A hit is 0/1 (or token-driven weighting like BM25, but still token-driven)

Failure modes: synonym rewrites, abstract expression, metaphors, cross-language, you misremember the word, you used a different phrasing back then

Embedding retrieval (continuous)

You are asking: “Which paragraphs are near this query in vector space?”

What hits is a vector neighborhood: even without any shared keywords, it may still be very close

In my code I use

normalize_embeddings=True+IndexFlatIP, which is equivalent to cosine similarity retrieval (after normalization, inner product = cosine), so this “region” notion is very explicit: similarity is angular closeness.

“Because it will definitely hit” is very important:

Embedding retrieval is not answering ‘whether it exists,’ but forcing an answer to ‘which are the most similar.

’

This turns retrieval from a “sparse matching problem” into a “ranking problem.”

Why low-score hits are also useful: what I’m doing is a “recall-first” evidence pack, not “precise search results”

In a vault system like this, “low-score hits” are often:

rare but related early expressions (your older version is looser in wording, but structurally homologous)

bridge paragraphs across themes (weakly related semantically, but can trigger a new structure chain)

LLM “evidence triggers”: the model can more easily extract a shared structure across multiple weak evidence lines (especially later with Gate / Evidence / Claim governance links)

So the reasonable strategy is not “set a threshold and throw away low-score hits,” but:

Retrieval layer: recall as much as possible (recall-first)

Rendering/inference layer: strict constraints (evidence-first + gate)

In other words: low-score embedding hits, in my system, belong to “B-side candidate evidence (suspicious but usable)”—I’ll gradually explain this architecture later as I explain the full domain.

Their value is not that “they themselves prove something,” but that “they might pull back a structure cluster.”

In the previous article I already mentioned: even if you only do the most basic embedding query, recalling historical fragments that are closest to your current development problems or decision context is already constraining the model.

It may not be a hard rule, it may not be an explicit schema or gate, but it forms a contextual “soft boundary”—the model no longer freely diverges in an anchorless semantic space, but operates within the vector neighborhood formed by your past expressions, judgments, and structural habits.

For concrete issues during development, architecture choices, naming conventions, and even trade-off tendencies, this recall will quietly pull the model’s attention back to your own semantic track.

It does not guarantee absolute correctness, but at least provides direction; it does not eliminate noise, but reduces drift.

Before you have strong structural constraints, this embedding-based context recall is essentially a lowest-cost cognitive alignment mechanism—as a soft constraint, it’s better than generation with no anchor at all.

A Typical Promotion Flow: Sovereign_Log (human-written knowledge pool) → multiple reference counting → association clustering → generate auditable promotion proposals → human/audit gating → rendered by AI into fully compliant documents and enter docs (constitutional library / IR)

A full promotion flow is actually a compression path from high-entropy behavior to institutionalized structure: Sovereign_Log, as a human-written knowledge pool, carries all raw cognitive residual shadows; then through multiple reference counting it captures true invocation frequency, then through association clustering it identifies co-occurrence relationships between structures, then generates auditable promotion proposals, filters noise and bias through human and audit gates, and only then hands off to AI for structured rendering, turning it into compliant documents that enter docs (constitutional library / IR).

This chain looks clear, but each step hides complex problems: statistical stability, identity consistency, cluster interpretability, threshold setting, governance boundaries, semantic compression—instability in any link will cause the mainline to drift.

The reason to do multiple reference counting and association clustering is not for a formal “data-driven” posture, but to return to a plain and harsh principle—counting what you say is not as good as counting what you do.

In high-frequency development mode, with hundreds of queries per day, dense note-taking, continuous decisions, your true cognitive center of gravity will not show up in declarations, but in repeatedly invoked fragments.

The body is honest, the path is honest, and repeatedly cited structures almost inevitably carry real utility.

A structure that is triggered many times, invoked across scenarios, and continuously reappears cannot be just accidental noise.

Therefore, the true purpose of promotion is not upgrading documents, but extracting a cognitive mainline from behavioral residuals.

Multiple references are gravity, clustering is the path shape, proposals are institutional candidates, Gate is rational calibration, and AI is only the final language compressor.

There is only one core motivation: in the flood of countless micro-decisions, extract your road’s mainline, letting it condense from fragmented actions into a schedulable, auditable, inheritable structure.

This is the original idea, and it is the fundamental reason this whole system exists.

Where Are the Difficult Parts?

The real difficulty is not “process design,” but that when you try to turn high-entropy human language into traceable, computable, auditable structural units, all the implied instability will be exposed.

First is the positioning problem.

Human text is naturally messy, with no stable boundaries and no native IDs.

When you write notes in Sovereign_Log, you cannot possibly number every point manually while thinking.

Even if you force numbering, does the number belong to a paragraph, a sentence, a clause, or some cross-paragraph logical unit?

Use the first half of a sentence as an anchor? Or the second half?

Rely on machine chunking?

Is the machine sentence-splitting algorithm stable?

If you add a paragraph earlier in a revision, all subsequent paragraph positions shift.

How do you choose granularity?

Too coarse, multiple points get mixed together; too fine, semantics get shredded.

What you face is not an “index problem,” but a “semantic identity problem”—in a natural-language world without native IDs, how do you give an idea a stable identity that won’t drift with formatting edits?

If this step is unstable, all later reference counting and clustering analysis becomes a building on sand.

Second is the association clustering problem.

You counted references, but that is only the strength of a “point.”

Clustering means deciding which points form a path.

But what is the standard?

Co-occurrence count?

Temporal proximity?

Cross-project reuse frequency?

Semantic similarity?

If you rely on embedding similarity, that is semantic approximation, not invocation behavior; if you rely on join-key co-occurrence, that may be just accidental adjacency.

What exactly are you clustering?

Concepts?

Argument patterns?

Decision templates?

If you don’t have a clear clustering target, the algorithm will only give you a mathematical structure, not a cognitive structure.

The difficulty of clustering is not algorithmic complexity, but whether “the type of structure you want to recognize” is clearly defined.

Further down is the model intervention position.

Fully hard-coding definitely won’t work.

You can count, rank, score, but if the generated proposal is all JSON concatenation, lacking language coherence and semantic compression ability, it simply cannot enter the docs layer as institutional text.

So should you use a model?

Of course.

But where?

If the model intervenes too early—for example, participating in chunking, identity determination, or deciding whether a reference is valid—it will contaminate auditability.

More critically: what content is “a valid citation” to feed the model?

Raw snippets?

Or evidence packs with context?

Should it include TEXTSHA?

Should it include file_sha256?

How do you ensure the model can only organize language within a verified evidence set and cannot “complete” a logical chain that doesn’t exist?

How do you completely prevent the model from generating conclusions in the proposal that are not supported by counted evidence?

This forms three cores:

First, stability of semantic identity—how to construct a stable citation anchor that does not drift in natural language.

Second, interpretability of structural clustering—what type of structure are you actually identifying.

Third, boundary control of model rendering—the model can only compress and organize, not invent or expand.

If these three points are not strictly layered, promotion will become a kind of “automation illusion that looks rigorous”: unstable statistics, uninterpretable clustering, unbounded model.

What you are trying to do is not a simple document upgrade, but institutionalize a real cognitive path.

It is hard not because the code is long, but because you are trying to build a repeatable, auditable, replayable compression channel between high-entropy language and low-entropy institutions.

So this project had better be worth the “ticket price,” worth me putting so much effort into it.

It is entirely because I believe that if I don’t complete this as my work’s infrastructure, I simply have no way to build up.

Now, in this article, I can only roughly talk about the architecture I have already implemented and some principles.

I’ll explain the general outline clearly—these are some successful experiences left after trial and error.

This is to give programmers in front of the screen who are considering reproducing it a reference, and maybe save you some exploration time.

It’s divided into three parts.

The First Block: Evidence-ify the Whole Universe

First, it must be made clear that “evidence-ifying the whole universe” and “vectorization” are fundamentally not the same kind of engineering.

Vectorization solves the semantic similarity problem; its essence is mapping text into embedding space and doing nearest-neighbor search through distance computation; it is suitable for retrieval, but it has no stable identity, no unique key, is not auditable, and is not replayable.

Evidence-ifying the whole universe solves the text identity problem; its goal is not “is it similar,” but “does it truly exist, can it be uniquely located, can it be stably traced back, and can it enter the institutional layer for citation.”

The former belongs to semantic space: continuous, probabilistic; the latter belongs to content space: discrete, deterministic; it is a content-addressing system.

What you are doing is not index optimization, but a transformation layer from language to verifiable entities; this is governance infrastructure, not a retrieval tool.

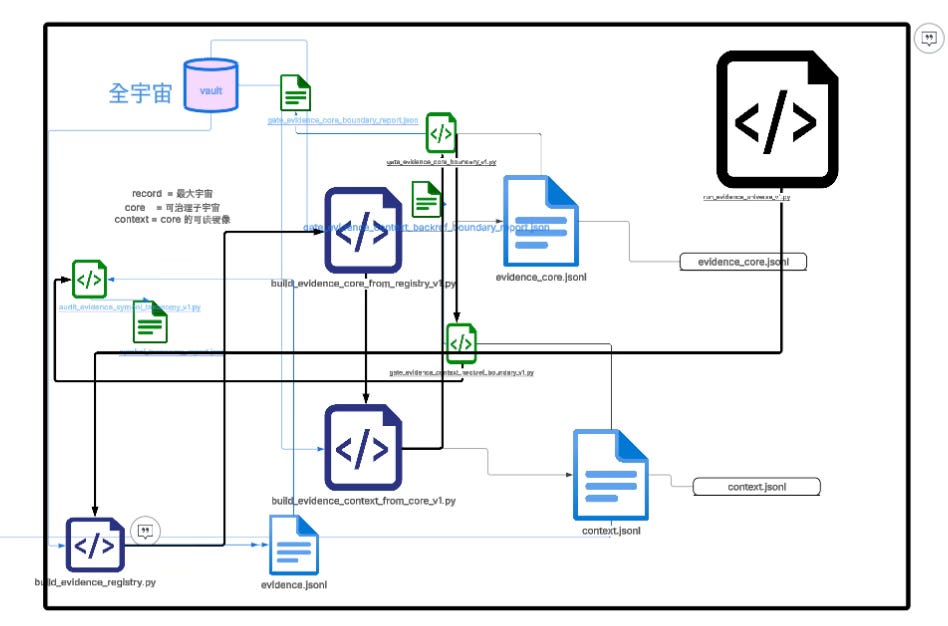

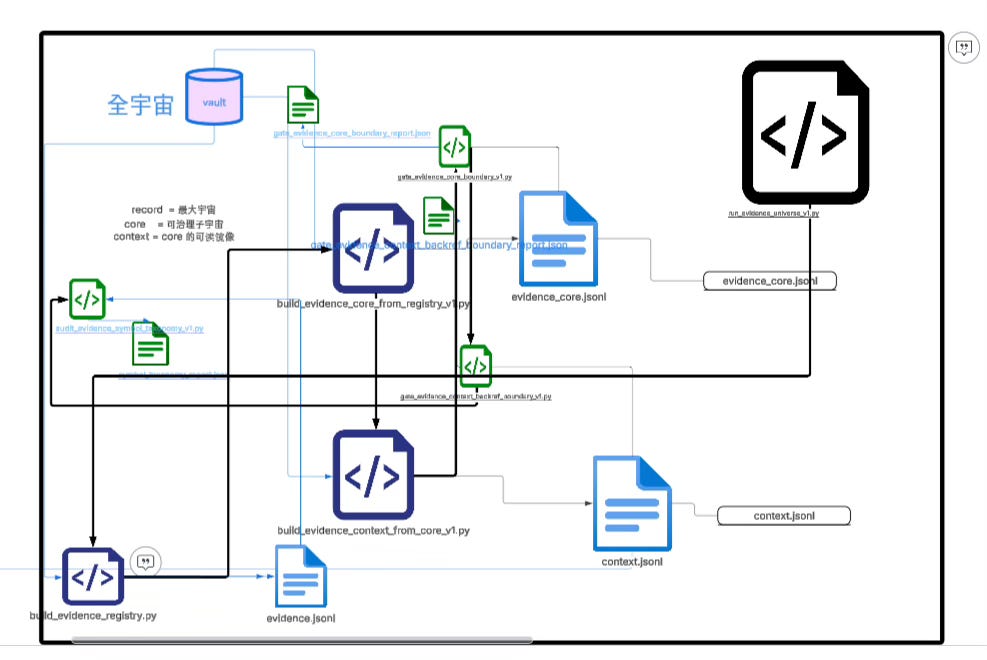

The entire evidence-universe pipeline can be compressed into three layers of structure and one main execution line.

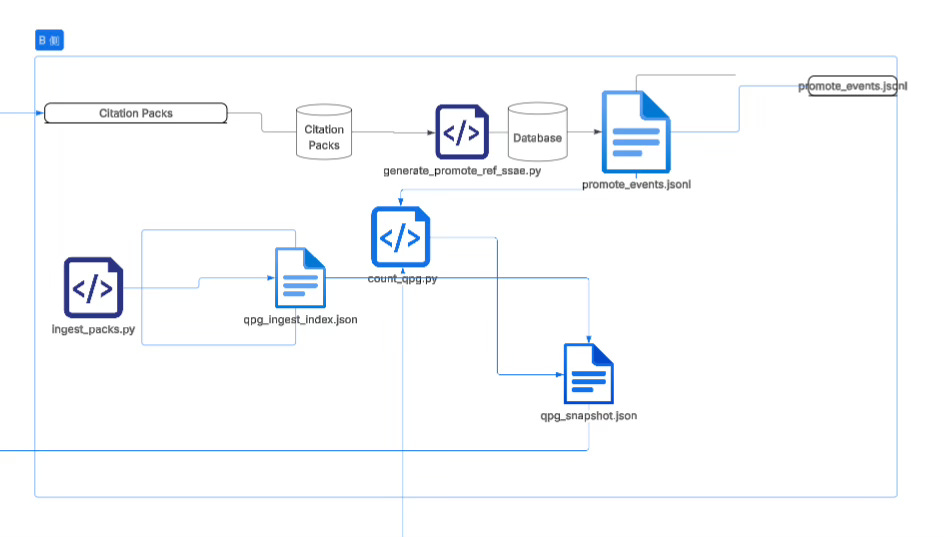

The first layer is built by build_evidence_registry.py as the record layer (record): it scans Markdown text, splits it with normalized paragraph rules, and generates a full paragraph set containing ssa_e (identity info like doc_id and span_hash), locator, preview, and statistical fields.

Record is the maximal universe; it is a structured mirror of the raw text, preserving all semantic raw materials.

The second layer is built by build_evidence_core_from_registry_v1.py as the core layer (core): it enforces denoising and uniqueness constraints on top of record, filters template titles, structural noise, and invalid fragments, ensures (doc_id, span_text_hash) uniqueness, and forms a stable evidence set that can be aligned and joined.

Core is not for reading, but for institutional alignment; it is the filter layer before the semantic world enters the governance world.

The third layer is built by build_evidence_context_from_core_v1.py as the context layer (context): using core’s unique key to back-reference record, it completes locator and preview so every core key has a readable image and traceable location, making human audit possible.

The whole chain is orchestrated by run_evidence_universe_v1.py, which sequentially generates record, core, and context, and then calls gate_evidence_core_boundary_v1.py to verify core’s uniqueness and structural form, calls gate_evidence_context_backref_boundary_v1.py to verify that context can back-reference core and that positioning information is consistent, and when necessary uses audit_evidence_symbol_taxonomy_v1.py to audit symbol-structure distribution.

These gates and audits are not decoration—they are the boundary control layer that ensures stable identity and prevents structural drift.

On top of this, the vector retrieval layer uses index_vault.py to build semantic indices, and query_vault.py to output citations_pack_v0 for later use, but when entering the institutional pipeline, downstream scripts (such as generate_promote_ref_ssae.py) will use context for back-reference, converting locator into stable SSA-E reference events.

The vector layer is responsible for “finding potentially relevant fragments,” and the evidence universe is responsible for “turning relevant fragments into identity entities that can be institutionally cited.”

Therefore, the core of this diagram is not the pipeline but the layering: record is the full paragraph universe, core is the denoised-and-unique alignment universe, context is the readable image universe for core, gate is the boundary constraint layer, and vector retrieval is only an upstream assistant.

Vectors solve the retrieval problem, the evidence universe solves the identity problem; vectors belong to the semantic layer, the evidence universe belongs to the governance layer; vectors give you similarity, the evidence universe gives you certainty.

Without the evidence universe, promotion statistics can only rely on locator or semantic approximation and cannot resist edit drift; with the evidence universe, you truly build a stable language identity system, letting every thought segment have a verifiable, joinable, institutionalizable content identity.

This is the true role and main path of evidence-ifying the whole universe

.

Pits You Will Soon Hit

At this point, you have to face a problem you and I cannot avoid, and that we have repeatedly stepped into: positioning drift.

What do you use as the key?

Path?

Title?

Paragraph number?

Character range?

You will find that no matter which you choose, in later links you will always lose part of it, or it won’t align, or back-references will fail.

I’ve also spun in place here many times.

In the end, the only conclusion is: the solution may only be “hash identity first.”

Think about Git, the strongest version system on this planet—its only inspiration is: identity must bind to content, not position.

So-called “positioning drift” is essentially not drift at all, but that you are using “position” as “identity.”

Locator (path, heading, paragraph_index, char_range, etc.) is naturally only a rendering-position tool, not an institutional identity.

Add one line at the top of a document and paragraph_index changes; change a heading and heading_path changes; move a file and note_path changes; auto-format and char_range changes.

These are not bugs, but physical properties of natural-language documents: they do not guarantee stable addresses.

So “loss” in your pipeline becomes normal—A-side references carry locator, B-side can’t resolve; snippets in citations packs can’t be found in the evidence registry; TEXTSHA or SSA-E doesn’t match and backref drops a portion; you re-chunk to fix it and identity drifts again, patching holes in a loop with no end.

The core is one sentence: identity is not a hard constraint in the pipeline but soft information; if it’s soft, it will inevitably be dropped at some stage.

Git’s insight is extremely simple: content-addressing wins.

Git does not identify objects by “which line number,” but by the hash of canonical bytes; paths are only pointer mappings in the tree, while true object identity is always stored in the content address.

This maps directly to your evidence universe: the core key must be (doc_id, span_text_hash), locator can only exist as a readable pointer, and any join must not use position fields as the primary key.

I am already on this road—SSA-E / TEXTSHA as join keys, paragraph_index downgraded to rendering location—but it is not enough; it must be upgraded into a hard rule: join keys can only be content identities; any reference event must carry identity; canonicalization must be frozen before hashing and consistent end-to-end across the whole chain.

Otherwise, unstable identity will contaminate statistics, clustering, and promotion.

Usually two more pits follow.

The first is granularity: spans too coarse mix multiple points together and dirty the reference statistics; spans too fine mean tiny sentence-level edits change hashes, cluster fragmentation increases, and mainline extraction destabilizes.

Granularity determines the shape of institutional abstraction—this is the tension of the structure layer.

The second is model contamination: once the model enters rendering, it naturally tends to “fill in” gaps, connecting blanks between evidence with semantics and generating conclusions that look complete but are not supported by counted evidence.

So inside the evidence-universe block, the model must absolutely not participate in identity generation or key inference; the model can only reorder, compress, and cite already existing evidence blocks, and cannot generate new fact blocks—this is “provable citation closure” (I actually did not use a model here at all).

Once you allow the model to expand facts, the entire auditability is destroyed.

In the end, the principles you need to carve into the system boundary are only three sentences: identity before semantics; determinism before readability; gating before rendering.

As long as these three don’t move, positioning drift will be compressed to a minimum, identity loss will become an explicit error rather than silent corrosion.

The Second Block: Referenced Evidence and Counting

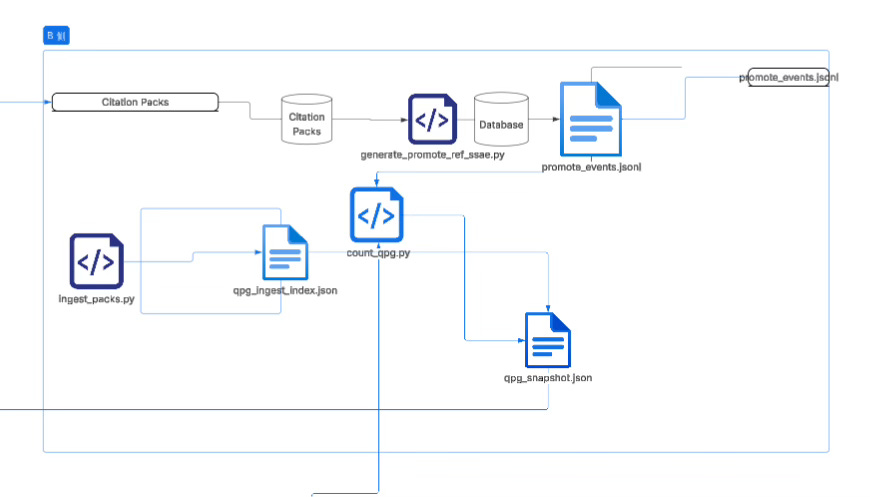

If, according to the plan in the previous article, you connect a wrapper that hooks your real development project pipeline directly to this vault, then the massive daily query records will ultimately need to land into a pipeline that “can be governed, can be counted, can be promoted”; and the so-called B-side data pipeline in your diagram is doing exactly this: extracting “retrieval behavior” out of the semantic retrieval layer and turning it into structured facts that can enter the promotion system.

It converts citation packs (retrieval return packs) into two kinds of outputs—one is a countable, clusterable B-signal index, and the other is a traceable, auditable Promote reference event, and finally aggregates them into the downstream-consumable QPG snapshot used for proposals and rendering.

The concrete chain is very clear: ingest_packs.py eats citation packs, extracts hit keys (hit_keys), and when necessary adapts TEXTSHA→SSA-E, producing a run-scoped ingest index so that “what you cited today” becomes a countable object; generate_promote_ref_ssae.py does locator backref from citation packs, restoring stable SSA-E reference events as much as possible, outputting promote events (and compatible legacy fields) so that “whether this citation can be institutionalized” becomes an auditable fact; finally count_qpg.py reads the ingest index (and optionally combines core registry and promote ledger), counts B signals and analyzes missingness/coverage, producing qpg_snapshot.json as the unified input for later clustering, proposal generation, and AI rendering

.

In one sentence: citation packs are first turned by ingest_packs.py into a “countable behavior index,” then turned by generate_promote_ref_ssae.py into “auditable reference events,” and finally compressed by count_qpg.py into a “downstream-consumable QPG snapshot,” thereby converting your daily real retrieval and citation behavior into an institutional-grade dataflow that can drive mainline extraction and promotion decisions.

I’ll directly give you a snapshot example to look at:

"dedup_rules": {

"B_dedup": "(run_id, SSA-E)"

},

"inputs": {

"core_keyset": "_system/artifacts/derived/evidence_registry/core/v1/evidence_core.jsonl",

"core_present": false,

"ingest_index": "_system/artifacts/derived/promote_eval/v0/qpg_ingest_index.json",

"promote_ledger_present": false

},

"items": [

{

"counts": {

"B_count": 6

},

"risk": {

"b_distinct_runs": 6,

"b_sparse": false,

"coverage_core": false

},

"score": {

"primary": 6

},

"sources": {

"B_runs": [

"20260204T021324Z_query_vault_d871c310f7",

"20260204T165509Z_query_vault_2f20fafd71",

"20260204T181147Z_query_vault_cccfa2209c",

"20260204T182015Z_query_vault_61fb5cf34b",

"20260209T195916Z_query_vault_d3f4ea4058",

"20260209T200152Z_query_vault_2002a3ffa1"

],

"caps": {

"B_truncated": false,

"cap": 50

}

},

"ssa_e": {

"doc_id": "SL-2026-01-31-0001-Schema-Version-Is-Identity-Not-Inference",

"span_text_hash": "ac14a502c77fc6194b7af260944cdeda839550cfbc609bdb7fcb028edfc0e5f4"

}

},

An auditable statistical snapshot: each item is a “candidate evidence atom,” with strict identity (SSA-E), reproducible behavior counts (B_count), explainable risk features (distinct runs / sparse / coverage_core), and replayable source run_id lists.

The model’s role here should not be “reasoning about truth,” but only translating these statistical facts into compliant language: organizing “repeatedly referenced identity entities” into claims/notes/thresholds in a proposal document, rather than inventing new content from semantics.

From the structure of your snapshot fields, it is already very close to the ideal input that is “impossible to hallucinate-render,” because it compresses the model’s room to make things up to a minimum:

dedup_rules.B_dedup = (run_id, SSA-E)explicitly tells the model what the counting definition is, preventing it from treating repeated citations as multiple independent sources;inputs.core_present / promote_ledger_presentboolean flags directly expose whether upstream/downstream is available, meaning the rendering layer must explicitly acknowledge missingness in the text (e.g., if coverage_core=false it cannot write “already covered in core”);items[*].ssa_eprovides hard identity (doc_id + span_text_hash), which is the constraint “citations must point to identity”;counts.B_count+sources.B_runsmakes “what you did” fully replayable, so the model can only say 6 times / 6 runs as 6 times / 6 runs;risk.coverage_core=falseis a crucial “no-inference nail,” forcing the model to admit: even if high-frequency, it is not yet core-covered (or core_keyset not loaded), so it can only be described as a “high-frequency candidate,” not a “confirmed alignable evidence.”

If you treat it as rendering input (Model Feed), what the model should be allowed to output can be strictly limited to three types of sentences:

Fact sentences: direct statements from fields (e.g., this SSA-E was cited 6 times on the B side, from 6 distinct runs).

Risk sentences: direct statements from risk fields (e.g., core coverage is missing, so it can only be treated as a candidate and requires core/context backref completion).

Action sentences: next-step suggestions based on system rules, but must be explicitly labeled as “operational suggestions/to be verified,” and the trigger conditions must come from fields (e.g., to enter proposal, require core_present=true or coverage_core=true before allowing promotion to a strong claim).

Conversely, what the model must absolutely not do can also be inferred: any interpretation of span content, any elaboration of “what this means,” any claim that a threshold is satisfied when it is not in fields (e.g., turning coverage_core=false into “covered”) should be treated as overreach.

In other words, this snapshot is a typical strong structure, weak model: the model only organizes language within structural boundaries, while real identity, counting definitions, and missingness are locked in input.

By the way, the “institutional meaning” of your sample item is: it is almost a natural proposal seed—B_count=6 and b_distinct_runs=6 indicates it’s not an accidental single-session hit, but repeated invocation across runs; but coverage_core=false also clearly tells you: even if high-frequency, it cannot be directly promoted into “alignable institutional evidence,” and must first complete core/context coverage or backref integrity, otherwise rendering can only describe it as a “high-frequency candidate entry,” not an “evidence-grounded conclusion.”

Okay, not finished—neither perfect nor done.

This is me imagining it beautifully, but there are still lots of small bugs in the middle not solved.

Let’s continue, because the next module below is the rendering module, and we need to use an LLM (GPT-4o-mini).

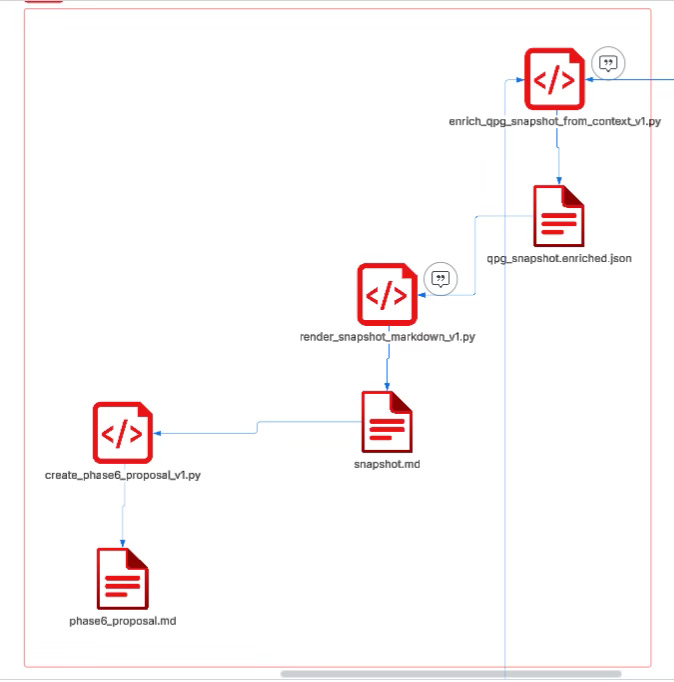

After the snapshot, we enter the rendering layer:

The Third Block: Model Rendering

When we talk about the rendering module, there is only one truly core problem: how, under the premise of “strong structure, weak model,” to make the text readable, clusterable, reviewable, while absolutely not allowing the model to fabricate or fill in at will.

If the model can expand facts by vibe, fill in causality, and infer thresholds, then all the earlier efforts in evidence-ification, counting, and gating will be destroyed; but if you don’t use the model at all, the output becomes rigid structural splicing, lacking human readability and governance expressiveness.

So the key is not “use or not use the model,” but strictly confining the model within the rendering boundary—it can only organize, compress, and reorder already existing structured facts, and cannot create any new fact units.

This downstream pipeline is essentially doing layer-by-layer compression: converting an “auditable counting snapshot” into “readable, gateable, promotable governance text.”

First, enrich_qpg_snapshot_from_context_v1.py uses (doc_id, span_text_hash) as the sole hard key, deterministically backfilling locator and preview from context.jsonl into the pure-count items in qpg_snapshot.json, generating qpg_snapshot_enriched.json.

The meaning of this stage is turning abstract statistical objects into “reviewable evidence cards,” explicitly marking missingness and backref status, ensuring identity and context do not drift.

Then, render_snapshot_markdown_v1.py renders the enriched snapshot into a human-facing snapshot.md, outputting leaderboards, cluster views, and risk warnings and other reading structures; in this stage, the LLM can participate in language-level compression and phrasing optimization, but must strictly bind to input fields and must not introduce any claim, inference, or judgment not present in the snapshot or context.

Finally, create_phase6_proposal_v1.py uses the rendered artifact or structured snapshot as the ground truth source to generate a Phase6 proposal, organizing candidate items into claims and actions, while enforcing that all cited join keys can only come from SSA-E or TEXTSHA in the snapshot, thereby completely decoupling “fluency of expression” from “accuracy of evidence.”

The goal of this mechanism is not to make the model stronger, but to make the model more constrained

.

The model is responsible for readability and compression of expression; the structure is responsible for identity, counting, risk, and gating boundaries; the model can only write within a locked evidence closure, and cannot extend the fact boundary.

It is precisely under this kind of “constrained writing” that clustering and readability remain, while hallucination and filling-in are suppressed into behavior that cannot mechanically occur.

This is the true meaning of “strong structure, weak model.”

We won’t discuss the code mechanisms and gating in the middle for now; I’ll show you the final output:

---

schema_version: promote_qpg.phase6_proposal/v1

run_id: 20260214T_PHASE6_0001

generated_at: 2026-02-14T16:58:53+00:00

source_sha256: d4ba9e75fe1027b699862733cecceb6095ed6c23772c200a7d372b5ca5be4213

bundle_id: BND-UNKNOWN

doc_path: docs/UNKNOWN.md

human_label: UNKNOWN.md

---

# Phase 6 — Proposal (Claims-first)

## Decision Summary

The proposal addresses governance risks related to schema versioning and identity declaration.

## Claims

### C-0001

- kind: `normative`

- threshold_pass: `True`

- support_join_keys: (SL-2026-01-31-0001-Schema-Version-Is-Identity-Not-Inference,ac14a502c77f…)

- text: The `schema_version` is an identity declaration, not something the system may infer from time ordering, trace continuity, file location, or heuristics.

- notes: Supported by evidence with signal strength 6.

### C-0002

- kind: `normative`

- threshold_pass: `True`

- support_join_keys: (04d4fac5b440b37865e7f1a6ae7d49bc480364c96ce2409ec4a06c1ae8799013,9345e730b7b9…)

- text: Enforcing schema_version and identity rules is critical for maintaining system integrity.

- notes: Supported by evidence with signal strength 5.

### C-0003

- kind: `normative`

- threshold_pass: `True`

- support_join_keys: (01c1d8a24c98deb156506116b639b13e8d9cea8eb89b589b1a1243374dbb8abc,2bd5f52203b3…)

- text: Tool results are recorded as standalone facts in the ledger, independent of execution traces.

- notes: Supported by evidence with signal strength 4.

### C-0004

- kind: `normative`

- threshold_pass: `False`

- support_join_keys: (0b52473ae4937859cea3de3ef868b622a9766f0447a307a39145e107808f8f23,1723e33a0cac…)

- text: Releases become replayable and auditable only when inscribed in the ledger.

- notes: Evidence indicates missing context signals, which may affect the reliability of this claim.

## Actions

### A-0001

- kind: `docs_patch_intent`

- target_doc: `docs/UNKNOWN.md`

- support_join_keys: (04d4fac5b440b37865e7f1a6ae7d49bc480364c96ce2409ec4a06c1ae8799013,9345e730b7b9…)

- text: Add clarifications regarding the importance of schema_version and identity rules.

## Appendix

### themes

```json

[

"Governance risks related to schema versioning and identity declaration."

]

ranked_evidence

[

{

"rank": 1,

"join_key_pair": "(SL-2026-01-31-0001-Schema-Version-Is-Identity-Not-Inference,ac14a502c77f…)",

"signal_strength": 6,

"risk_flags": [

"coverage_core"

]

}

]

risk_heatmap

{

"missing_context": {

"count": 5,

"details": [

{

"join_key_pair": "(1e8fe760fab715b983ffc3bce6d18e91eed9096e36c7f96b643d702382048e9f,bb2a267d166b…)",

"signal_strength": 4

}

]

}

}

warnings_readable

"Missing context signals: 5"

Phase 6 Artifact → What’s Done

From the perspective of format and structure, this Phase 6 final artifact already meets my minimum requirements:

It uses explicit

schema_version / run_id / source_sha256as a replayable identity shell.It splits the proposal into three machine-processable sections:

Claims / Actions / Appendix.Every claim carries auditable fields:

kind / threshold_pass / support_join_keys / text / notes.support_join_keysforms a hard binding to evidence identity.threshold_passmakes gating results explicit.

The appendix preserves traceable artifacts (themes, evidence ranking, risk heatmaps, readable warnings), ensuring the proposal can be read by humans and re-verified by machines.

I won’t expand on how the intermediate chain is enriched, rendered, and gated for now. I’m only showing the final artifact because it most directly defines the input–output shape of what we “feed to the model to render” and what “enters governance flow,” and it proves this chain already has (in form) the rudiment of being promotable into docs (constitutional layer / IR).

Next Step → Insert into Docs via docs_patch_plan

The next step is to insert these repeatedly-hit, important experience claims accumulated during extensive development into the docs constitutional layer.

This uses a docs_patch_plan:

Target document

Anchor position

Standardized insertion blocks

Conflict strategy

Evidence sources

Human sign-off before applying

Insertion accuracy must rely on a stable anchor protocol:

explicit structural anchors first

heading paths second

content-hash fallback last

Not semantic inference.

Insertion content must be standardized blocks with identity + dedup keys, not free text.

This is likely the next stage.

Current Core Problem → Information Loss Across Layers

Right now, there are still many problems in the middle of this chain, and the most core one is:

Information keeps getting lost in layer-by-layer transformations, and in the end only a small portion of content can enter the promotion flow.

This is not some small bug in one script; this is the open-boundary structural problem described above.

Why This Isn’t “More Windows / More Code”

This is fundamentally not something you can solve by opening more windows or writing a few more pieces of code.

If boundary definitions are unclear—without a unified primary-key system and stable semantic constraint ability—you cannot assemble a truly stable system through “window operations.”

The problem lies in the structure layer, not the compute layer.

Typical failure modes:

After vectorization, hit fields often cannot be stably located.

Any ID based on paragraph numbering drifts with small text edits.

One extra character or one missing character changes the hash.

Slight field reordering immediately invalidates references.

If you fully freeze fields and strictly hash everything, you can get perfect positioning ability—but then you face:

how to cluster?

how to generalize?

how to merge similar-but-not-identical content into one structural unit?

And once you introduce a model to polish and fill language (readable, coherent, shareable), you must accept:

the model will fill logical gaps for narrative smoothness,

those fillings often have no real evidence source,

semantic coherence goes up while evidential certainty goes down.

Conversely, if you don’t use a model at all and rely only on hard-coded structural output:

the content can be verified,

but it is almost unreadable, unshareable,

and hard to produce cognitive influence.

The Three-Way Tension

This is not an algorithm problem. It’s simultaneously constraining three capabilities that naturally pull against each other:

1) Locatability

Needs freezing: hashes, stable IDs, immutable anchors

Pros: verifiable, auditable

Cost: extremely fragile—small changes break it

2) Clusterability

Needs similarity: variability, generalization, mergeability

Pros: can discover structural attractors

Cost: blurry boundaries, drifting primary keys

3) Readability

Needs language: model rendering, narrative organization

Pros: understandable, communicable

Cost: hallucinations and groundless filling-in

If any one is maximized, the other two collapse:

maximize hash positioning → clustering drops, language becomes rigid

maximize similarity clustering → positioning distorts, anchors become unstable

maximize readability → evidence is diluted, primary keys get contaminated

So What This Really Is

This is not a “not enough tech” problem. It’s a structural three-body problem.

What needs to be designed is not a smarter algorithm, but a clearly layered mechanism:

Bottom layer: freeze (Registry / SSA-E primary-key layer)

Middle layer: variability (clustering and similarity space)